Besides JSON, jpt (the JSON power tool) can also output strings and numbers in a variety of encodings that a sysadmin or programmer might find useful. Let’s look at the encoding options in the output of jpt -h:

% jpt -h

...

-T textual output of all data (omits property names and indices)

Options:

-e Print escaped characters literally: \b \f \n \r \t \v and \\ (escapes formats only)

-i "<value>" indent spaces (0-10) or character string for each level of indent

-n Print null values as the string 'null' (pre-encoding)

-E "<value>" encoding options for -T output:

Encodes string characters below 0x20 and above 0x7E with pass-through for all else:

x "\x" prefixed hexadecimal UTF-8 strings

O "\nnn" style octal for UTF-8 strings

0 "\0nnn" style octal for UTF-8 strings

u "\u" prefixed Unicode for UTF-16 strings

U "\U "prefixed Unicode Code Point strings

E "\u{...}" prefixed ES2016 Unicode Code Point strings

W "%nn" Web encoded UTF-8 string using encodeURI (respects scheme and domain of URL)

w "%nn" Web encoded UTF-8 string using encodeURIComponent (encodes all components URL)

-A encodes ALL characters

Encodes both strings and numbers with pass-through for all else:

h "0x" prefixed lowercase hexadecimal, UTF-8 strings

H "0x" prefixed uppercase hexadecimal, UTF-8 strings

o "0o" prefixed octal, UTF-8 strings

6 "0b" prefixed binary, 16 bit _ spaced numbers and UTF-16 strings

B "0b" prefixed binary, 8 bit _ spaced numbers and UTF-16 strings

b "0b" prefixed binary, 8 bit _ spaced numbers and UTF-8 strings

-U whitespace is left untouched (not encoded)

Strings

While the above conversion modes will do both number and string types, these options will work only on strings (numbers and booleans pass through). If you work with shell scripts these techniques may be useful.

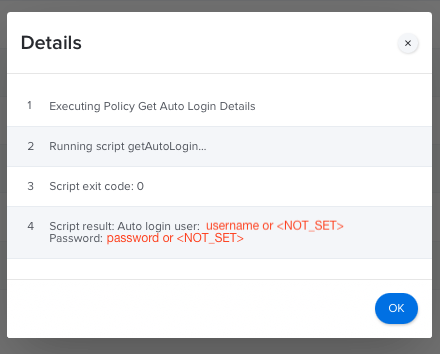

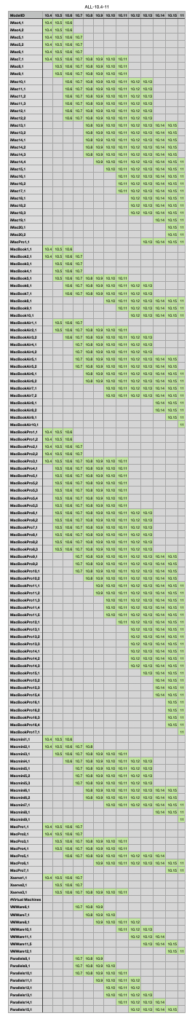

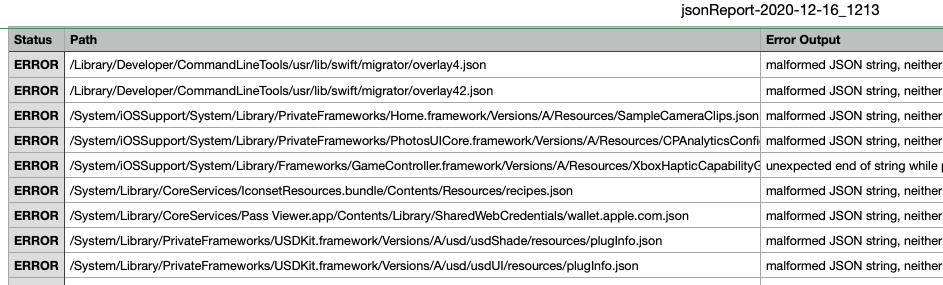

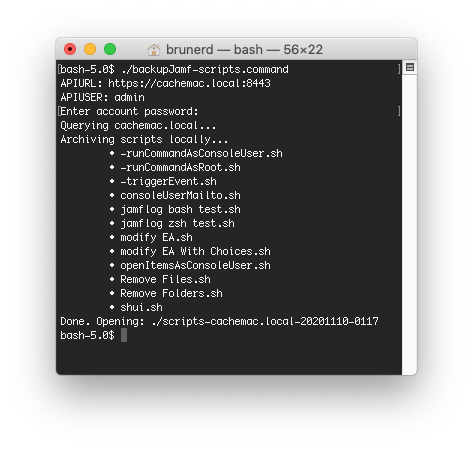

If you store shell scripts in a database that’s not using utf8mb4 table and column encodings then you won’t be able to include snazzy emojis to catch your user’s attention! In fact this WordPress install was so old (almost 15 years!) the default encoding was still latin1_swedish_ci, which is an odd but surprisingly common default for many old blogs. Also if you store your scripts in Jamf (still in v10.35 as of this writing) it uses latin1 encoding and your 4 byte characters will get mangled. Below you can see that in Jamf things look good while editing but fail once saved; the eventual workaround is to use an encoding like \x escaped hex (octal is an alternative).

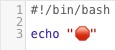

Let’s use the red “octagonal sign” emoji, which is a stop sign to most everyone around the world, with the exception of Japan and Libya (thanks Google image search). Let’s look at some of the ways 🛑 can be encoded in a shell script:

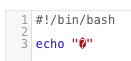

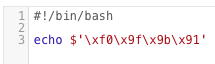

#reliable \x hex notation for bash and zsh

% jpt -STEx <<< "Alert 🛑"

Alert \xf0\x9f\x9b\x91

#above string can be used in both bash and zsh

% echo $'Alert \xf0\x9f\x9b\x91'

Alert 🛑

#also reliable, \nnn octal notation

% jpt -STEO <<< "Alert 🛑"

Alert \360\237\233\221

#works in both bash and zsh

% echo $'Alert \360\237\233\221'

Alert 🛑
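To make it clearer what these escapes contain, here is a minimal plain-shell sketch that produces the same style of output without jpt. The helper names hex_escape and octal_escape are made up for illustration, and unlike jpt -Ex and -EO (which pass printable ASCII through) this sketch escapes every byte of its argument. It assumes od, tr and sed are available, which is the case on macOS and most *nix systems.

```shell
# hex_escape / octal_escape: hypothetical helpers, not part of jpt.
# Both dump the raw UTF-8 bytes with od and prefix each byte with an escape.

hex_escape() {
  # od -tx1 prints each byte as two hex digits; strip spaces and newlines,
  # then put \x in front of every pair of digits
  printf '%s' "$1" | od -An -tx1 -v | tr -d ' \n' | sed 's/\(..\)/\\x\1/g'
  echo
}

octal_escape() {
  # od -to1 prints each byte as three octal digits; prefix each group with \
  printf '%s' "$1" | od -An -to1 -v | tr -d ' \n' | sed 's/\(...\)/\\\1/g'
  echo
}

# build the stop sign from its octal bytes so this file stays pure ASCII
stop=$(printf '\360\237\233\221')
hex_escape "$stop"
octal_escape "$stop"
```

The four bytes printed are the UTF-8 encoding of the emoji, which is why the escaped string survives a latin1 database: it contains only ASCII characters.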

#\0nnn octal notation

% jpt -STE0 <<< "Alert 🛑"

Alert \0360\0237\0233\0221

#use with shell builtin echo -e and ALWAYS in double quotes

#zsh does NOT require -e but bash DOES, safest to use -e

% echo -e "Alert \0360\0237\0233\0221"

Alert 🛑
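Since echo -e behaves differently between shells, printf with the %b format may be a more predictable way to decode the \0nnn style. POSIX specifies that %b interprets \0 followed by up to three octal digits in its argument. The function name decode_escapes below is only an illustrative wrapper.

```shell
# decode_escapes: hypothetical wrapper around printf '%b'
# %b expands backslash escapes (including \0nnn octal) found in the argument
decode_escapes() {
  printf '%b\n' "$1"
}

# single quotes are fine here because printf, not the shell, does the decoding
decode_escapes 'Alert \0360\0237\0233\0221'
```

Because the escapes are expanded by printf itself, this form does not depend on whether the shell's echo needs -e.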

#-EU code point for zsh only

% jpt -STEU <<< "Alert 🛑"

Alert \U0001f6d1

#use in C-style quotes in zsh

% echo $'Alert \U0001f6d1'

Alert 🛑

The -w/-W flags can encode characters for use in URLs

#web/percent encoded output; for non-URLs -W and -w give the same result

% jpt -STEW <<< 🛑

%F0%9F%9B%91

#-W URL example (encodeURI)

% jpt -STEW <<< http://site.local/page.php?wow=🛑

http://site.local/page.php?wow=%F0%9F%9B%91
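What separates the two web encodings is the set of characters left untouched. As a rough illustration of the stricter encodeURIComponent style, here is a plain-shell sketch that percent-encodes every byte except the characters JavaScript's encodeURIComponent leaves alone (A-Z a-z 0-9 - _ . ! ~ * ' ( )). The function name urlencode_component is hypothetical and this is not how jpt does it internally; it only assumes od and tr are present.

```shell
# urlencode_component: hypothetical helper, a sketch of encodeURIComponent-style
# percent-encoding working on the raw UTF-8 bytes of its argument
urlencode_component() {
  printf '%s' "$1" | od -An -tx1 -v | tr -s ' ' '\n' | while read -r hex; do
    [ -n "$hex" ] || continue
    case $hex in
      # 0-9, A-Z, a-z and - _ . ! ~ * ' ( ) pass through unencoded
      3[0-9]|4[1-9a-f]|5[0-9a]|6[1-9a-f]|7[0-9a]|2d|5f|2e|21|7e|2a|27|28|29)
        # turn the hex byte back into its character via an octal escape
        printf "\\$(printf '%03o' "0x$hex")" ;;
      *)
        # everything else becomes %HH with uppercase hex digits
        printf '%%%s' "$(printf '%s' "$hex" | tr a-f A-F)" ;;
    esac
  done
  echo
}

urlencode_component 'page.php?wow=1'
```

An encodeURI-style variant would add the URL punctuation characters such as : / ? = & and # to the pass-through list, which is why a full URL keeps its scheme and path separators in that mode.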

#-w will encode everything (encodeURIComponent)

% jpt -STEw <<< http://site.local/page.php?wow=🛑

http%3A%2F%2Fsite.local%2Fpage.php%3Fwow%3D%F0%9F%9B%91

And a couple other oddballs…

#text output -T (no quotes), -Eu for \u encoding

#not so useful for the shell scripter

#zsh CANNOT handle multi-byte \u character pairs

% jpt -S -T -Eu <<< "Alert 🛑"

Alert \ud83d\uded1

#-EE for a JavaScript ES2016 style code point

% jpt -STEE <<< "Alert 🛑"

Alert \u{1f6d1}

You can also \u encode all characters above 0x7E in JSON with the -u flag

#JSON output (not using -T)

% jpt <<< '"Alert 🛑"'

"Alert 🛑"

#use -S to treat input as a string, no enclosing " quotes required

% jpt -S <<< 'Alert 🛑'

"Alert 🛑"

#use -u for JSON output to encode any character above 0x7E

% jpt -Su <<< 'Alert 🛑'

"Alert \ud83d\uded1"

#this will apply to all strings, key names and values

% jpt -u <<< '{"🛑":"stop", "message":"Alert 🛑"}'

{

"\ud83d\uded1": "stop",

"message": "Alert \ud83d\uded1"

}
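The \ud83d\uded1 pair in the output above is the UTF-16 surrogate pair for code point U+1F6D1. The arithmetic is standard UTF-16 and can be sketched with shell arithmetic; the function name surrogate_pair is just an illustrative helper, not something jpt provides.

```shell
# surrogate_pair: hypothetical helper showing how a code point above U+FFFF
# is split into a UTF-16 high/low surrogate pair
surrogate_pair() {
  cp=$(($1))                          # accepts decimal or 0x-prefixed hex
  if [ "$cp" -lt 65536 ]; then
    # code points in the Basic Multilingual Plane need only one \u escape
    printf '\\u%04x\n' "$cp"
    return
  fi
  v=$((cp - 0x10000))                 # 20-bit value to split in half
  hi=$((0xD800 + (v >> 10)))          # top 10 bits go in the high surrogate
  lo=$((0xDC00 + (v & 0x3FF)))        # bottom 10 bits go in the low surrogate
  printf '\\u%04x\\u%04x\n' "$hi" "$lo"
}

surrogate_pair 0x1F6D1
```

For U+1F6D1 the intermediate value is 0xF6D1, whose top ten bits are 0x3D and bottom ten bits are 0x2D1, giving 0xD83D and 0xDED1.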

Whew! I think I covered them all. If there are newlines, tabs and other invisibles you can choose to output them or leave them encoded when you are outputting to text with -T

#JSON in, JSON out

% jpt <<< '"Hello\n\tWorld"'

"Hello\n\tWorld"

#ANSI-C string in, -S to treat as string despite lack of " with JSON out

% jpt -S <<< $'Hello\n\tWorld'

"Hello\n\tWorld"

#JSON in, text out: -T alone prints whitespace characters

% jpt -T <<< '"Hello\n\tWorld"'

Hello

World

#use the -e option with -T to encode whitespace

% jpt -Te <<< '"Hello\n\tWorld"'

Hello\n\tWorld

Numbers

Let’s start simply with some numbers. First up is hex notation in the style of 0xhh and 0xHH, which has been around since ES1; use -Eh and -EH respectively. All alternate output (i.e. not JSON) needs the -T option. In the shell you can combine multiple flags together, but only the last flag can take an argument, as -E does below.

#-EH uppercase hex

% jpt -TEH <<< [255,256,4095,4096]

0xFF

0x100

0xFFF

0x1000

#-Eh lowercase hex

% jpt -TEh <<< [255,256,4095,4096]

0xff

0x100

0xfff

0x1000
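For comparison, plain integers can be given the same treatment with the shell's own printf, which is handy for checking jpt's output. The helper name to_hex is hypothetical; only the standard %X conversion is used.

```shell
# to_hex: hypothetical helper printing each argument as 0x-prefixed uppercase hex
to_hex() {
  for n in "$@"; do
    printf '0x%X\n' "$n"
  done
}

to_hex 255 256 4095 4096
```

Swapping %X for %x gives lowercase digits, and %o gives octal digits to which a 0o prefix can be added the same way.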

Next up are ye olde octals. The -Eo option converts numbers to octal, but with the more modern 0o prefix introduced in ES6

#-Eo ES6 octals

% jpt -TEo <<< [255,256,4095,4096]

0o377

0o400

0o7777

0o10000

Binary notation debuted in the ES6 spec. It uses a 0b prefix, and since ES2021 it also allows _ underscore separators

#-E6 16 bit wide binary

% jpt -TE6 <<< [255,256,4095,4096]

0b0000000011111111

0b0000000100000000

0b0000111111111111

0b0001000000000000

#-EB 16 bit minimum width with _ separator per 8

% jpt -TEB <<< [255,256,4095,4096]

0b00000000_11111111

0b00000001_00000000

0b00001111_11111111

0b00010000_00000000
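The grouping rule is easy to reproduce by hand: peel off 8 bits at a time, pad each group to a full byte, and join the groups with underscores. Below is a minimal sketch in plain shell; the function name to_bin and its "minimum number of groups" parameter are assumptions made for illustration and say nothing about how jpt implements it.

```shell
# to_bin MIN_GROUPS NUMBER: hypothetical helper
# prints NUMBER as 0b-prefixed binary in 8-bit groups joined by _,
# padded with zero groups until at least MIN_GROUPS groups are shown
to_bin() {
  min_groups=$1 n=$2 out='' groups=0
  while [ "$n" -gt 0 ] || [ "$groups" -lt "$min_groups" ]; do
    byte=$((n & 255))                 # lowest 8 bits
    n=$((n >> 8))                     # drop them from the number
    bits='' i=0
    while [ "$i" -lt 8 ]; do          # build the 8 digits, least significant first
      bits="$((byte & 1))$bits"
      byte=$((byte >> 1))
      i=$((i + 1))
    done
    out="$bits${out:+_$out}"          # prepend the group, with _ if others exist
    groups=$((groups + 1))
  done
  printf '0b%s\n' "$out"
}

to_bin 2 255    # two groups minimum, like the 16 bit minimum width above
to_bin 1 255    # one group minimum
```

With a minimum of two groups 255 comes out as 0b00000000_11111111, and with a minimum of one group it is 0b11111111; numbers that need more bits grow by whole groups in either case.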

#-Eb 8 bit minimum width with _ separator per 8

% jpt -TEb <<< [15,16,255,256,4095,4096]

0b00001111

0b00010000

0b11111111

0b00000001_00000000

0b00001111_11111111

0b00010000_00000000

If you need to encode strings or numbers for use in scripting or programming, then jpt might be a handy utility for you and your Mac. If your *nix has jsc, it should work there too. Check the jpt Releases page for the Mac installer package download.